After playing with some more probabilistic data structures and talking about Count-Min Sketch, I wanted to expand on my previous work with the Pwned Passwords data set. This is quite an interesting step up from a Bloom Filter and also allows us to query out information about the frequency of a password.

Sketchy

The blog post on Count-Min Sketch is a good starting point if you're not familiar with what they are and how they work, but here I'm going to be focusing on what you can do with them. A CMS is a great data structure to store frequency information on events in a stream and in this case we're going to be using a CMS to store frequency information about passwords in the Pwned Passwords data set.

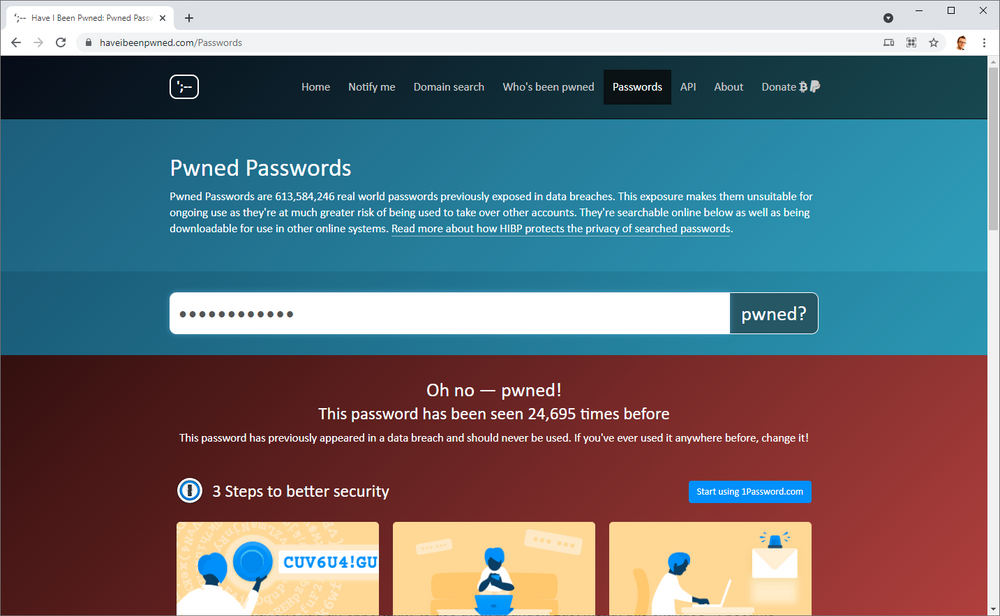

Pwned Passwords

The Pwned Passwords collection is curated by Troy Hunt and you can query against a list of 613,584,246 real world passwords leaked in data breaches with the goal of preventing users of your service from choosing poor passwords. The API is simple and provides strong privacy guarantees whilst being pretty fast to query against. The Pwned Passwords data set is also available to download, coming down in its smallest version as an 11GB compressed file that expands out to 25.1 GB. That's a pretty big file to keep locally and query against, and querying against the API comes with network latency, so let's see if we can improve things beyond my previous efforts using a Bloom Filter, When Pwned Passwords Bloom, using a Count-Min Sketch!

Setting up the Count-Min Sketch

For full details on how all of this works you need to refer to the Count-Min Sketch explainer post I wrote, but I'm going to assume a basic level of understanding here. When we initialise the CMS you can do so by specifying the desired width w and depth d, or, by specifying your desired error tolerance ϵ and your desired probability to be in tolerance 𝛿, from which we can calculate w and d.

w = ceil(2/ϵ)

d = ceil(log2𝛿-1)

I'm going to be trying to keep a small error tolerance and a low probability that we will exceed the tolerance.

ϵ = 0.000001

𝛿 = 0.001

Estimating the dimensions of the array, that means the sketch will contain 604m counters.

2/ϵ ⋅ log2𝛿-1 = 604,000,000

The Redis Bloom module uses 32-bit integers so we can estimate the size of the CMS at ~2.416GB.

32 * 604,000,000 = 19,328,000,000bits = 2.416GB

That's ~10% of the size of the original data which is quite a good saving, but let's see if we hit the target in reality. The final thing we can calculate is how large our error tolerance will be as we know the exact amount of items m that will be placed into the CMS.

Pr(~fx <= fx + ϵm) <= 1 - 𝛿

Again, for a full explanation of this you will have to refer back to the detailed CMS post, but we can calculate that the estimated frequency the CMS gives us (~fx) will be between the true frequency (fx) plus our error probability (ϵ) multiplied by the number of items in the sketch (m). Let's assume a password like herpaderp, with a true frequency of 501, and run the numbers.

~fx <= fx + ϵm

~fx <= 501 + 0.000001 * 613,584,246

~fx <= 1,115

The CMS will give us an estimated frequency for herpaderp between 501 and 1,115, 99.9% of the time. That seems like quite a large margin, and it is at 123% overestimated, but there's a little more to this. Let's try the same again with the most frequent password in the data set, 123456 of course, which has a true frequency of 24,230,577!

~fx <= fx + ϵm

~fx <= 24,230,577 + 0.000001 * 613,584,246

~fx <= 24,231,191

The CMS will now give us an estimated frequency for 123456 between 24,230,577 and 24,231,191, which is only 0.002% overestimated.

Elephant vs. Mouse

The phrase 'elephant flow' simply refers to an item with a high frequency in your data stream and 'mouse flow' refers to an item with a low frequency. One of the problems that we have with a CMS is that they are less accurate for mouse flows compared to elephant flows.

This is because, as I mentioned above, the estimated frequency the CMS gives us (~fx) will be between the true frequency (fx) plus our error probability (ϵ) multiplied by the number of items in the sketch (m).

~fx <= fx + ϵm

Given that we know ϵ = 0.000001 and that m = 613,584,246, we can then say the following..

~fx <= fx + 614

If we substitute in the frequencies for our passwords above, it all becomes clear.

~f("lolwat") <= 501 + 614

~f("123456") <= 24,230,577 + 614

If you're querying a CMS and the frequency of the item comes back as ~fx <= ϵm, then it's entirely possible the true frequency was 0! What you do with those items is up to you, but it's definitely worth knowing.

Building the CMS

To build the CMS we simply need to iterate over each line in the Pwned Passwords data and increment the count for each password by the appropriate amount. Creating a CMS for use like this is a one-time operation and until the Pwned Passwords data set changes, the CMS will not need to be regenerated.

<?php

use Palicao\PhpRebloom\CountMinSketch;

use Palicao\PhpRebloom\Pair;

use Palicao\PhpRebloom\RedisClient;

use Palicao\PhpRebloom\RedisConnectionParams;

require __DIR__ . '/vendor/autoload.php';

$e = 0.000001;

$d = 0.001;

$countMinSketch = new CountMinSketch(

new RedisClient(

new Redis(),

new RedisConnectionParams('127.0.0.1', 6379)

)

);

$start = microtime(true);

$before = memory_get_usage();

$countMinSketch->initByProbability('pwned-sketch', $e, $d);

$after = memory_get_usage();

echo ($after - $before) . "\n";

$count = 0;

foreach (importData() as $data) {

$countMinSketch->incrementBy('pwned-sketch', new Pair($data[0], (int)$data[1]));

$count++;

echo $data[0] . ':' . $data[1] . ' ' . $count . "\n";

}

$end = microtime(true);

echo 'Execution time: ' . ($end - $start) / 60 . 'm';

function importData(): Generator {

$fh = fopen('input.txt', 'r');

while(!feof($fh)) {

yield explode(':', trim(fgets($fh)));

}

fclose($fh);

}As you can see I used the ϵ = 0.000001 and 𝛿 = 0.001 values from above to initialise the CMS and then iterated over the Pwned Passwords data to increment the count for each password. The total creation time was almost 11 hours, very similar to the Bloom Filter, and as with the Bloom Filter I'm not overly concerned about improving this as it's a one-time event to generate the CMS.

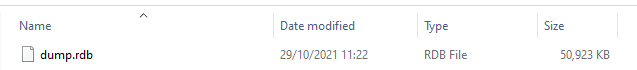

Saving the sketch

Using the SAVE command in Redis I created a snapshot containing the CMS for easy transport, sharing and backup.

redis-cli

127.0.0.1:6379> SAVE

OKWhat I wasn't expecting though was how small it was... Uh oh?

Debugging the size

The output containing the CMS is only ~51MB in size! Let's double check in Redis and see what it has to say...

scott@Gaming-Rig:/mnt/c/Users/scott/Documents/GitHub/sketchy-pwned-passwords$ redis-cli

127.0.0.1:6379> DEBUG OBJECT pwned-sketch

Value at:0x7fb646a52d70 refcount:1 encoding:raw serializedlength:52144266 lru:8113601 lru_seconds_idle:574Yep, the serializedlength is in Bytes and 52144266 is ~52MB. Digging a little deeper we can use the INFO command to get more details.

scott@Gaming-Rig:/mnt/c/Users/scott/Documents/GitHub/sketchy-pwned-passwords$ redis-cli

127.0.0.1:6379> CMS.INFO pwned-sketch

1) width

2) (integer) 2000000

3) depth

4) (integer) 10

5) count

6) (integer) 3650716681Here was and see the dimensions of the array are slightly different to what I expected at w = 2,000,000 and d = 10 meaning the size of our array should be:

32 * 20,000,000 = 640000000bits = 80MB

It turns out the difference in expected dimensions comes from how the redisbloom module calculates dimensions when you initialise based on probabilities and this will alter how far out from the true frequency our estimated frequency will be by quite some amount.

w = ceil(2/ϵ)

d = ceil(log(𝛿)/log(0.5))

void CMS_DimFromProb(double error, double delta, size_t *width, size_t *depth) {

assert(error > 0 && error < 1);

assert(delta > 0 && delta < 1);

*width = ceil(2 / error);

*depth = ceil(log10f(delta) / log10f(0.5));

}To make sure all of the Pwned Passwords data had been properly inserted into the CMS I decided to check the count provided by the INFO command above.

<?php

$total = 0;

$count = 0;

foreach (importData() as $data) {

$count++;

$total += (int)$data[1];

echo $data[0] . ':' . $data[1] . ' count:' . $count . ' total:' . $total . "\n";

}

function importData(): Generator {

$fh = fopen('input.txt', 'r');

while(!feof($fh)) {

yield explode(':', trim(fgets($fh)));

}

fclose($fh);

}That gave me the following.

scott@Gaming-Rig:/mnt/c/Users/scott/Documents/GitHub/sketchy-pwned-passwords$php count.php

...

FFFFFFFEE791CBAC0F6305CAF0CEE06BBE131160:2 count:613584246 total:3650716681There are 613,584,246 items in the Pwned Passwords data so the count is accurate and their total number of occurrences comes to 3,650,716,681 which is exactly the same as the 3,650,716,681 count that the INFO command gave me from the CMS above! There's no data missing from the CMS, it's definitely just been created at a different size. Let's try again by initialising the CMS with our own dimensions and see how that affects things.

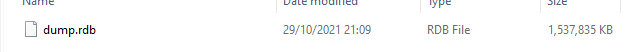

<?php

use Palicao\PhpRebloom\CountMinSketch;

use Palicao\PhpRebloom\Pair;

use Palicao\PhpRebloom\RedisClient;

use Palicao\PhpRebloom\RedisConnectionParams;

require __DIR__ . '/vendor/autoload.php';

$w = 2000000;

$d = 302;

$countMinSketch = new CountMinSketch(

new RedisClient(

new Redis(),

new RedisConnectionParams('127.0.0.1', 6379)

)

);

$start = microtime(true);

$before = memory_get_usage();

$countMinSketch->initByDimensions('pwned-sketch', $w, $d);

$after = memory_get_usage();

echo ($after - $before) . "\n";

$count = 0;

foreach (importData() as $data) {

$countMinSketch->incrementBy('pwned-sketch', new Pair($data[0], (int)$data[1]));

$count++;

echo $data[0] . ':' . $data[1] . ' ' . $count . "\n";

}

$end = microtime(true);

echo 'Execution time: ' . ($end - $start) / 60 . 'm';

function importData(): Generator {

$fh = fopen('input.txt', 'r');

while(!feof($fh)) {

yield explode(':', trim(fgets($fh)));

}

fclose($fh);

}Things looked a lot more promising during the process of generating the CMS and the total time was again almost 11 hours, but this time, all of the information looks much better.

scott@Gaming-Rig:/mnt/c/Users/scott/Documents/GitHub/sketchy-pwned-passwords$ redis-cli

127.0.0.1:6379> DEBUG OBJECT pwned-sketch

Value at:0x7fb646a52db0 refcount:1 encoding:raw serializedlength:1574742617 lru:8147635 lru_seconds_idle:488

(5.57s)scott@Gaming-Rig:/mnt/c/Users/scott/Documents/GitHub/sketchy-pwned-passwords$ redis-cli

127.0.0.1:6379> CMS.INFO pwned-sketch

1) width

2) (integer) 2000000

3) depth

4) (integer) 302

5) count

6) (integer) 3650716681

Querying the CMS

The final step is to now query the Count-Min Sketch and see what kind of counts we're getting from it compared to the actual counts.

<?php

use Palicao\PhpRebloom\CountMinSketch;

use Palicao\PhpRebloom\RedisClient;

use Palicao\PhpRebloom\RedisConnectionParams;

require __DIR__ . '/vendor/autoload.php';

$countMinSketch = new CountMinSketch(

new RedisClient(

new Redis(),

new RedisConnectionParams('127.0.0.1', 6379)

)

);

$candidates = [

'password1234' => 24695, // pwned

'troyhuntsucks' => 0, // not pwned

'hunter1' => 149080, // pwned

'scotthelmerules' => 0, // not pwned

'chucknorris' => 3501, // pwned

];

foreach ($candidates as $candidate => $actualCount) {

$start = microtime(true);

$pair = $countMinSketch->query('pwned-sketch', strtoupper(sha1($candidate)));

$end = microtime(true);

echo $pair[0]->getItem() . ' estimate:' . $pair[0]->getValue() . ' vs. actual:' . $actualCount . ' in ' . ($end - $start) . "ms.\n";

}As I did with the Bloom Filter, I've selected some candidates but this time I've updated my candidate array to include the actual count of the password from the Pwned Passwords data set. We can now query the candidates against the CMS and see what the estimated count from the CMS is and what the actual count from the Pwned Passwords data was.

scott@Gaming-Rig:/mnt/c/Users/scott/Documents/GitHub/sketchy-pwned-passwords$ php query.php

E6B6AFBD6D76BB5D2041542D7D2E3FAC5BB05593 estimate:25464 vs. actual:24695 in 0.0090880393981934ms.

A6B072F6AAB5D9DB2521C69AACF2E0F27057A202 estimate:809 vs. actual:0 in 0.00015902519226074ms.

25AFF7F4B1BB747833F5175789A1998B31CA4ED4 estimate:149881 vs. actual:149080 in 0.00014591217041016ms.

162DFB16EA77666800F359C6001765BD4E64D1F1 estimate:788 vs. actual:0 in 0.00014305114746094ms.

8E0FA56E12D5EF883092EAF5979B53F0B2CAC0A2 estimate:4215 vs. actual:3501 in 0.00014591217041016ms.The counts coming back are really quite accurate considering how small the CMS is and we're almost within the predictions I made above even though the filter is ~1GB smaller than estimated too. It seems the error margin is 700-800 from the true count and for the mouse flows, that represents quite a problem, but for the elephant flows, it's not really a problem at all. It certainly gives you the ability to look at a password and see if it's got no count/small count vs. a very large count, quite reliably.

Querying the API

You may take a slightly different approach to querying the API when using the CMS compared to in the previous post on the Bloom Filter, say only querying an item if the count is <800 to see if it is really a 0 count item. You could also perhaps come up with some slightly higher margin for your cut off to query back to the API and then just outright reject everything above that.

Using the same API timing data from my last post, with an average query time of 49ms for the API, and the average CMS query time here of 0.00193638801574708ms, that's still ~25,300x slower to query the API than the CMS, but, the CMS also takes 13x longer to query than the bloom filter from the last post! That Bloom Filter really is fast.

Admittedly, a CMS doesn't really seem like the best fit for the Pwned Passwords data. It's great to be able to extract the count somewhat reliably, but it also feels like the vast majority of use cases will be based simply on the password being present in the data set and not necessarily on the count, making the Bloom Filter solution the best option. Still though, it was an interesting exercise!

Links

Here are the links for the code and files used above: